Each year, the Tools Competition receives thoughtful and engaging proposals that aim to address challenges and opportunities in education from early childhood to higher education and beyond. During interactions with competitors, our team is often asked: “What can I do to set my tool or proposal apart from others?” and “How have winning teams approached key elements of the competition?”

The most successful competitors place learning engineering and research partnerships at the heart of their work and use them to drive continuous improvement, both for their product and for the field at large. While the approach can look different for every team, it often includes a strong understanding of how the data the tool generates can answer key questions about learning and a plan for leveraging the tool as an instrument for research and experimentation.

In this case study, we highlight the work of Smart Paper, a 2021 Tools Competition winner in the K-12 Assessment Track. Smart Paper represents an important case for other competitors who are facing questions about how to prioritize scaling to teacher and student users and how to make their tool and data useful for researchers in the medium and long term.

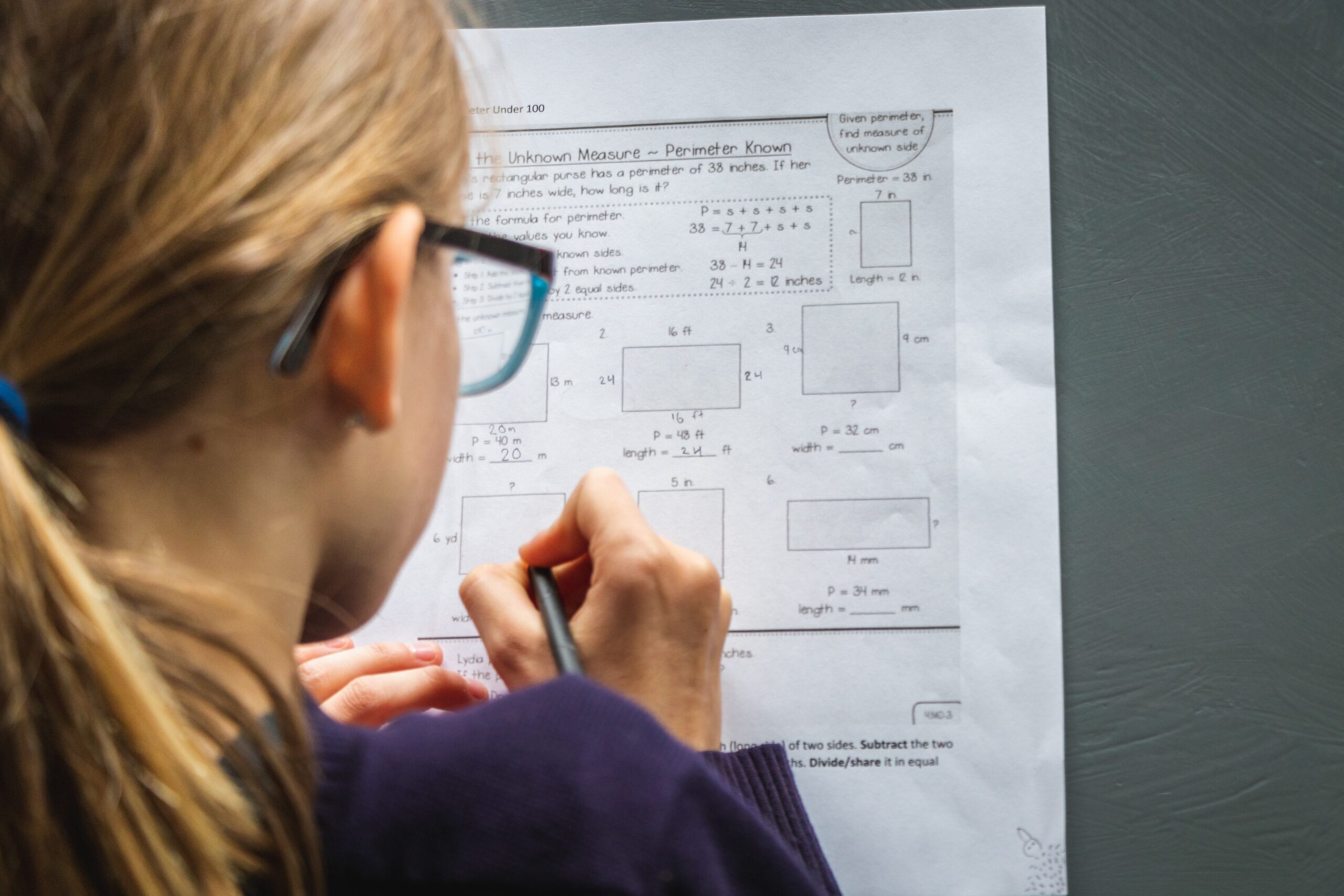

Smart Paper is an assessment technology that analyzes learning data on paper to automate the grading process and save teachers’ time. Teachers upload images of student work to Smart Paper, which evaluates student responses through AI and handwriting recognition.

These images of student work make up Smart Paper’s dataset, ImageNet for Education, which provides insight into students’ problem-solving processes. The data collected is used both to improve the tool itself and to drive learning science research more broadly. Ultimately, through its work, Smart Paper strives to reach every child who learns on paper, across communities and with different levels of access to technology.

We spoke to Smart Paper’s team leads Nirmal Patel and Yoshi Okamoto about their vision and purpose, and how they approached the learning engineering element of the competition.

Responses were edited for brevity and clarity.

The Tools Competition emphasizes learning engineering, rapid experimentation, and continuous improvement. These elements help teams understand if their approach works, for whom, and at what time, and are central to scaling effective products and generating high-quality data. How have you approached these elements in your work?

From the very start, we [Patel and Okamoto] shared the vision of building a technology that could reach all children across the world. We were particularly motivated to build technology for underserved or underprivileged communities, and those in low-tech or no-tech environments. This global vision drives our work.

In our experimentation and improvement processes, one of our priorities was to make the tool accessible to as many communities as possible. Since users need to upload images of student work to our tool to assess them, we focused on understanding the different levels of access to digital technology across and within India and Japan, the two planned implementation sites, and how the use of our tool may vary.

For example, in India, we found that most people used mobile devices to upload pictures of student work. In contrast, in communities with lower access to technology, people visited nearby towns to upload data.

In Japan, we found that people generally had access to a wider variety of technology, including scanners, and were able to upload data that way. Because the core idea of Smart Paper is to collect data from everyone, we knew that it was crucial to take the range of digital access into consideration.

In addition to ensuring that Smart Paper was accessible to users in different settings, we also continuously incorporated our findings into the tool, in order to maximize the value it can provide to teachers and researchers. When we first began working together, we knew we wanted to digitize paper at scale for better teaching and learning, but we didn’t know exactly what the user interface and the technology would look like. Through tests and pilots, we found that an overly complex tool wouldn’t work for users, and we simplified our interface and technology to make it as accessible to all teachers as possible. Even today, as we continue to share our tool with more people, we try to make it easier to use. This has been an important approach for us because we realize that when users feel pain points with Smart Paper, they can easily go back to other tools that they are more used to.

This continuous cycle of product development and iteration has helped us become more successful. Ultimately, we would largely attribute our success to how we respond to user needs and demands. These elements are instrumental in helping our platform grow and mature.

It is clear that Smart Paper has value for both teachers and researchers, although these user groups may have different needs. How do you structure Smart Paper and its data so that it is valuable for teachers and researchers alike?

This is an issue we frequently encounter. We always reflect on how the data we collect serves the values and interests of different groups, which aren’t always in alignment with each other.

For example, we currently have an enormous amount of data from multiple-choice questions. These multiple-choice tests don’t yield the most interesting insights for researchers because they don’t contain nuanced data on mistake patterns or thought processes. However, they are of high value to teachers because they are automatically graded and can save teachers’ time.

In an effort to collect more descriptive data on students’ problem-solving processes that could be helpful to researchers, we conducted a small-scale experiment using algebra problem sets with 100 students. This is unique in comparison to our other multiple-choice data, because it requires students to show their work. While this assessment generated data that could be valuable to researchers, such as problem-solving patterns and common misconceptions, the assessment wasn’t readily accepted in the classroom because the grading process was not as automated. Ultimately, this made it hard to scale because teachers value the efficiency of multiple-choice assessments and therefore prefer what AI can grade.

Teams at the earlier stages should primarily focus on the needs and interests of teacher and student users, which will help them determine what and how to scale. We take that approach to heart and also regularly think of ways to develop a dataset that is most useful to researchers, by piloting assessments that yield exciting data in a way that still allows us to scale.

What kinds of insights can both your team and external researchers draw from your current dataset?

Although the dataset that is “interesting” to researchers (from the above-mentioned algebra experiment) is relatively small, it is significant. This is because it has helped us uncover the processes and nuances of problem-solving, unlike the legacy approach to grading, which evaluates only students’ final answers. Even AI like GPT-4 has not been able to accurately reproduce the most common misconceptions that we identified in this dataset, likely because machines don’t learn the way humans do. Until now, teachers would need to analyze hundreds of answer sheets to collect such data. Smart Paper is changing that, and we believe that this data is going to be very valuable.

Internally, our data is structured by assessment at the item level, and we extract relevant data to share with external researchers. This is because the vast majority of our data currently consists of responses to multiple-choice questions, but the much smaller subset that comes from algebra problems that require students to show their work is what is exciting to researchers. Nonetheless, once more students take the tests that require them to show their work on paper, we will be able to provide a larger quantity of meaningful, organized data and make it accessible to researchers via a web interface.

In the coming years, we hope to eventually uncover the thought processes behind how students approach problem sets. From there, we hope to examine the geographical differences in how students solve problems, as well as many other patterns that will surely have tremendous potential.
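As an illustration of the item-level structure mentioned in the answer above, the following is a minimal sketch of how responses might be stored per item and how the "show your work" subset could be extracted for researchers. It is not Smart Paper's actual schema: the `ItemResponse` record, its field names, the `item_type` labels, and both helper functions are hypothetical and chosen only for this example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ItemResponse:
    """One student's response to one item (hypothetical schema)."""
    assessment_id: str
    item_id: str
    student_id: str
    item_type: str   # assumed labels: "multiple_choice" or "show_work"
    response: str    # digitized answer text
    is_correct: bool


def research_subset(responses):
    """Keep only the responses where students had to show their work."""
    return [r for r in responses if r.item_type == "show_work"]


def common_wrong_answers(responses, assessment_id, item_id, top_n=3):
    """Count the most frequent incorrect responses to a single item."""
    wrong = Counter(
        r.response
        for r in responses
        if r.assessment_id == assessment_id
        and r.item_id == item_id
        and not r.is_correct
    )
    return wrong.most_common(top_n)


# Made-up example data, for illustration only.
sample = [
    ItemResponse("alg1", "q1", "s1", "show_work", "x = 5", True),
    ItemResponse("alg1", "q1", "s2", "show_work", "x = -5", False),
    ItemResponse("alg1", "q1", "s3", "show_work", "x = -5", False),
    ItemResponse("alg1", "q1", "s4", "show_work", "x = 10", False),
    ItemResponse("mc1", "q7", "s1", "multiple_choice", "B", True),
]

print(len(research_subset(sample)))
print(common_wrong_answers(sample, "alg1", "q1"))
```

Keeping one record per student per item means the same table can serve both audiences: teachers see scores aggregated by assessment, while researchers can filter down to the items that expose mistake patterns.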

You allude to some of the things that you and your team have learned as you develop and scale Smart Paper. What other challenges have you faced in your work?

For Smart Paper to scale, it is really important to have a highly accurate Optical Character Recognition (OCR) model to digitize the handwritten data from student work. Given our global vision, it can be difficult to gather all the data we need to effectively train the OCR model on the handwriting patterns of students across grade levels, schools and communities. We’re very hopeful that we are on our way to achieving this. We spent a lot of time crawling through the early data ourselves to understand what the model was or wasn’t detecting, and this thoroughness has helped us assess and improve the OCR model. Papers are everywhere, so once we have our core technology ready, we can scale quickly.
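One common way to check what an OCR model is or isn't detecting is to compare its output with hand-transcribed answers and compute a character error rate. The sketch below shows that general technique under stated assumptions. The interview does not say which metric the Smart Paper team uses, and the function names and sample strings here are hypothetical.

```python
def edit_distance(reference: str, hypothesis: str) -> int:
    """Levenshtein distance: minimum single-character insertions,
    deletions, and substitutions needed to turn one string into the other."""
    prev = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        curr = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            substitution_cost = 0 if ref_char == hyp_char else 1
            curr.append(min(
                prev[j] + 1,                      # delete ref_char
                curr[j - 1] + 1,                  # insert hyp_char
                prev[j - 1] + substitution_cost,  # substitute or match
            ))
        prev = curr
    return prev[-1]


def character_error_rate(pairs):
    """Total edits divided by total reference characters.

    `pairs` is an iterable of (hand_transcription, ocr_output) strings.
    """
    pairs = list(pairs)
    total_chars = sum(len(ref) for ref, _ in pairs)
    if total_chars == 0:
        raise ValueError("reference transcriptions are empty")
    total_edits = sum(edit_distance(ref, hyp) for ref, hyp in pairs)
    return total_edits / total_chars


# Made-up transcriptions, for illustration only.
labeled = [("x=5", "x=5"), ("12", "1Z")]
print(character_error_rate(labeled))
```

Computing the rate separately for each grade level, school, or community would show where the model needs more training data, which is the kind of gap the answer above describes.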

What advice do you have for other developers who hope to create and grow successful ed tech tools?

Start small and fail in small pilots. It is key to find places where you can run small pilots to ensure that your technology is working – especially with AI, because many things can go wrong. Although our earliest pilot didn’t work out, we knew that it was key to testing and ensuring the quality of our AI technology. We are also strong advocates for learning and teaching efficacy at scale. To achieve that, it’s very, very important to bring in users – not just students, but administrators and teachers – from the very beginning, and let them get excited about being a part of the user experience design. It’s crucial to bring them in for firsthand feedback because they are the actual beneficiaries of the design.